Crossposted to Daily Kos

Stanley Milgram began his research into obedience in the early 1960s. His original intent had been to demonstrate that "just following orders" wasn't a legitimate excuse for Nazis who committed atrocities during the Holocaust.

It was his belief that only a select few people would engage in acts which could cause serious harm to others when ordered to do so. His belief was shared by the students he polled.

They were wrong.

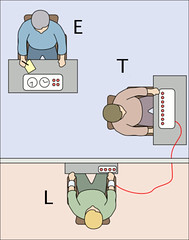

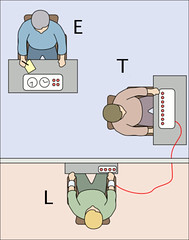

Milgram's experiment was a simple one that involved three people:

- the Authority Figure/Experimenter (E);

- the Technician/Teacher (T);

- the Learner (L);

The experiment was set up as follows:

"E" would show up in a white coat, tell two individuals that one of them would play the teacher and the other the learner, and explain the rules. Then he would hand a slip of paper to each one. One would say "Teacher" and the other would say "Learner." The learner (L) would move to another room and the teacher (T) would stay with the Experimenter.

Then they would get to work.

The Teacher would, through a microphone, read a question to the Learner. If the Learner got the question wrong, T would administer a shock. Each time the shock was administered, T would increase the voltage a little for the next time and L would scream in pain.

The dial went up to "450 volts." In many cases, this was marked as "DANGER" or "LETHAL."

The thing is, this experiment was a ruse. The "Learner" was part of the experiment, an actor along with the Authority figure. No one was shocked. No one was in pain. L wasn't being tested.

T was.

The idea of the experiment was to discover what our limitations are in terms of what we'd be willing to do to harm another, and how authority can influence those limitations. I'll get to the results soon, but first I have to explain something:

In social psychology, we talk about Diffusion of Responsibility, a problem that often occurs when people don't feel adequately responsible for the circumstances around them. Having an authority figure available to tell us what to do provides an immense amount of diffusion of responsibility.

In Milgram's experiment, E didn't use threats or cajole. If T didn't want to engage in the experiment, the experimenter would first say "please continue." If that failed, the next statement would be that "the experiment requires that you continue." If that didn't do the trick, E would say that "it is absolutely essential that you continue," and finally, "you have no other choice, you must go on."

If T still refused after those four statements, the experiment would end.

If the experiment didn't end through refusal, it would end after three "shocks" at the maximum level of 450.

There were no threats to T. There was no danger. No loss for refusing. It was merely those statements on the part of the experimenter.

It's easy for us to look at this and think, "I wouldn't ever go that far." It's easy for us to say "I'd never do that."

But the fact of the matter is that Milgram's work and the studies that have replicated it have shown a remarkable consistency: more than 60% of the sample stuck with the study until the very end, even though they believed at the time that they might be doing serious harm to another human being.

So yes, I'd love to be able to say "I'd never do a thing like that." But I know enough about psychology and self-deception to understand full well that I can't be certain how I'd behave if faced with such a dilemma. On the surface, it seems like a no-brainer and I honestly can't conceive of doing anything but walking out. But I don't know that I'm that much different from so many people who go along with the experimenter. I don't know that I'm better than they are and I don't know that I'm that strong a person.

I hope I am.

But I'm also fine with not knowing whether I'm one of that 60+% who would buckle under the dread of the words "it is absolutely essential that you continue."

So.

Now you know about Milgram's work. Some of you knew all this already. Some of you didn't.

But that's not the point of this piece.

The point is to talk about where we go from here.

In 1973, Milgram wrote an article for "Harper's," "The Perils of Obedience:"

The problem of obedience is not wholly psychological. The form and shape of society... have much to do with it. There was a time, perhaps, when people were able to give a fully human response to any situation because they were fully absorbed in it as human beings. But as soon as there was a division of labor things changed... The breaking up of society into people carrying out narrow and very special jobs takes away from the human quality of work and life. A person does not get to see the whole situation but only a small part of it, and is thus unable to act without some kind of overall direction. He yields to authority but in doing so is alienated from his own actions.

Even Eichmann was sickened when he toured the concentration camps, but he had only to sit at a desk and shuffle papers. At the same time the man in the camp who actually dropped Cyclon-b into the gas chambers was able to justify his behavior on the ground that he was only following orders from above. Thus there is a fragmentation of the total human act; no one is confronted with the consequences of his decision to carry out the evil act. The person who assumes responsibility has evaporated. Perhaps this is the most common characteristic of socially organized evil in modern society.

Let me tell you a story. A woman I know has a son who, in September of 2001, was in his early teens. He was at home with his father when the first tower fell. They were watching TV at the time, glued to the set.

When the tower fell, his first comment was "cool!"

There was an awkward pause and at first he didn't understand what he'd just said.

Then there was a moment of realization on his part. He looked at his father, confused, and said "wait-- that was real, wasn't it?"

This kid-- a perfectly ordinary kid in so many ways-- no delusions, no dissociative disorders, no disconnect from reality-- said "cool" when one of the towers fell. He said this not because he was mean, or cruel or inhuman.

He said it because it happened on television. And when big, dramatic things happen on television, they happen because of effects, because of writers, because of cameras and tricks and angles and stunt performers.

I'm going to break from this for a moment, because something big is going on:

As I write this diary, there's a hostage situation over at one of the Clinton campaign offices in New Hampshire. I don't know much more than that. No one seems to know much more at the moment. I wonder how many people watching it are feeling separated from it, and how many are taking it like it's something real and profound. Judging from a quick scan of freerepublic.com (I will not link there), there are definitely people who seem to take it as though it's a game, and something worthy of jokes. I don't mean the sort of jokes that people make when nervous or disturbed. I mean the sort of jokes that people make when they are, in fact, completely separated from humanity.

I don't know what to say about this. I sometimes forget how bad the comments over there can be, and I shouldn't be bothered by them, but I just find it disturbing. I think we need to find a way to bring these people to light without allowing ourselves to be sucked into their twisted world. I have yet to figure out a way of doing that.

Obviously, I'm not going to be posting this diary at the time I expected to. There's no point at all in posting something like this until the current crisis is resolved, so by the time you're reading all this, we'll all know a lot more about what's going on here.

So, anyway: more from Milgram's article:

I will cite one final variation of the experiment that depicts a dilemma that is more common in everyday life. The subject was not ordered to pull the lever that shocked the victim, but merely to perform a subsidiary task... while another person administered the shock. In this situation, thirty-seven of forty adults continued to the highest level of the shock generator. Predictably, they excused their behavior by saying that the responsibility belonged to the man who actually pulled the switch. This may illustrate a dangerously typical arrangement in a complex society: it is easy to ignore responsibility when one is only an intermediate link in a chain of actions.

I'm going to mention another concept that I've talked about before:

cognitive dissonance -- the condition that exists when our behavior contradicts our beliefs. When dealing with cognitive dissonance we sometimes change our behavior, but we sometimes also change our beliefs.

We do not want to think of ourselves as a country which supports or promotes torture. It contradicts our beliefs. So when we see that we have, in fact, engaged in torture, we have some choices:

- we can change our beliefs to convince ourselves that we think torture is ok;

- we can say "this has to stop" and change our behavior;

- we can say "this has to stop" and then convince ourselves that we've changed our behavior without actually doing it;

- we can say "we oppose torture" and then reclassify everything we do as something that's not torture.

It's not a difficult argument to make that we, as a nation, have adopted a combination of the third and fourth options. We've moved our debate to treat torture as though its acceptability were worth discussing, and we've done so not through an open discussion but through a redefining of torture into something that ignores the reality behind it.

This denial of the reality behind it is so severe that someone who's experienced torture actually got lectured by, of all people, Mitt Romney on how he defines torture.

Here's the reality as I see it:

- we, as a matter of policy, torture people;

- we, as a matter of sense of self-integrity, don't want to acknowledge that we torture people;

- despite all this, some of us openly acknowledge that we torture.

We need a wave of action about this, pushing our media to reflect a truthful and accurate narrative about this. Therefore, every time we see a "news" article which:

- uses the word "waterboarding" but not the word "torture;"

- describes the act of "waterboarding" as "simulated" drowning;

- references without critique the claim that "we do not torture;"

- references torture on the part of lower-level military personnel without mentioning any higher ups;

- makes any reference to "torture" without acknowledging any history of torture on the part of the US...

we need to write letters. We need to bombard these papers with letters reminding them of the truth. We need to not let them get away with rewriting the narrative to dismiss torture. We need to eliminate diffusion of responsibility by forcing ourselves front and center into the reality of what's gone on.

Research on obedience has shown that we comply easily when we feel removed from the situation. We ignore the reality of things we cannot easily control, assuming that someone else will take responsibility. We find it easier to push a button that will kill someone five miles away than to pull a trigger that will kill someone who will look into our eyes. We find it easier to ignore an act of atrocity and pretend it is not our problem than to take responsibility for it.

Torture can only be supported through obfuscation and lies. We will not stop this until every one of us chooses to actively challenge these lies and until we push ourselves to not just bemoan the use of torture but fight it, every step of the way. Fight it when someone claims we need it to get information. Fight it when someone pretends it isn't real. Fight it when someone refuses to acknowledge it. Fight it when someone obscures its meaning.

Never.

Stop.

Fighting.

Torture.
